Radio Receiver Design

John Wilson takes a lesson from history.

Phase Noise And Front Ends

As regards front end selectivity, you will always find that a classic analog receiver with one or two tuned RF stages will beat the pants off a wide open front end, even one with half octave filters fitted. For a perfect example, take a look at the front end of an AR88.

I reviewed this dear old giant a few months back for Short Wave Magazine and was staggered by the sharpness of the RF stages. It turned in a second order intercept point that the latest receivers can't come near.

I should explain that I do my 2nd order measurements using signals at 6.5MHz and 7MHz, resolving the intermod product at 13.5MHz, because I decided that these frequencies represent real life situations and that is what I'm trying to explain to readers and users.
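For anyone who wants to check the arithmetic, here is a minimal Python sketch of where the second-order products of two tones fall. The function name and the choice of kHz as the unit are my own for illustration; only the 6.5MHz and 7MHz test frequencies and the 13.5MHz product come from the text above.

```python
def second_order_products(f1_khz, f2_khz):
    """Return the second-order mixing products of two tones, in kHz."""
    return {
        "f1+f2": f1_khz + f2_khz,        # sum product
        "|f2-f1|": abs(f2_khz - f1_khz), # difference product
        "2*f1": 2 * f1_khz,              # second harmonic of tone 1
        "2*f2": 2 * f2_khz,              # second harmonic of tone 2
    }

# Test tones from the text: 6.5MHz and 7MHz, expressed in kHz.
products = second_order_products(6500, 7000)
for name, freq in products.items():
    print(f"{name}: {freq}kHz")
```

The sum product lands at 13500kHz, which is the 13.5MHz figure used for the measurement.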

Your comment on the JRC NRD-545 receiver echoes my own findings. There's a simple test to check both phase noise and ultimate stop-band attenuation: tune very slowly through a crystal oscillator signal from something like an elderly 100kHz calibrator.

The NRD-545 and most DSP receivers have a rumbly, grumbly noise floor extending up to 20kHz either side of the wanted signal with miscellaneous squeaks and whistles coming and going all the time.

Try doing that with your SP-600 and I bet you can't hear a thing until you whoosh into the edge of the oscillator signal.

Take a look at Ulrich Rohde's book on receiver design and check out the response characteristics of a DSP IF. There are spurious humps rising as high as -70dB relative to the filter passband and since your ear and the effects of the receiver AGC system raise these signals even higher, the result is noisy dissatisfaction for the listener.

AM Audio Processing Takes A Toll On Receiver Testing

The 60% modulation depth for AM sensitivity measurements came about because of the increasing use of heavy audio processing by AM broadcasters in order to make their stations sound LOUDER to the guy with a transistor radio glued to his ear, or to people listening to AM radio in cars.

The system most often found over here in Europe is called Optimod. It deliberately processes (and of course distorts) the audio feeds to transmitters to such an extent that a spectrum plot of a station using Optimod shows wall-to-wall sidebands extending to the very edge of the station's allocated frequency space; on European MW that means +/- 4.5kHz.

Another feature of Optimod for AM broadcasters is that negative-going audio peaks are compressed whilst positive going peaks are expanded.

This allows the modulation depth to be greater than 100%, usually 110%, on positive audio swings but at the same time prevents the negative-going swing from going to carrier zero.

So, you have what to all intents and purposes is permanent 100% modulation.

Prior to Optimod, the average level of modulation on an AM broadcast signal was empirically set at 30%, so that no peak of audio would overmodulate the then linear AM systems and that is where the 30% test level originated.

I don't know who can claim to have first suggested a test level of 60% to more accurately represent real life but certainly we at Lowe and Radio Netherlands began using 60% many years ago.

SINAD Trouble - Receiver Sensitivity Revisited

SINAD (signal to noise and distortion, meaning the ratio of signal plus noise plus distortion to noise plus distortion) has long been used in the communications field to provide a more repeatable method of measuring receiver sensitivity for all comms modes including wide and narrow band FM, AM and SSB/CW.

The technique involves feeding receiver audio into an agc-controlled amplifier so that the same level is always applied to the rest of the measuring system, then knocking out the modulation fundamental (usually 1kHz) and accurately measuring what is left.

It's the same technique that you would find in a harmonic distortion analyser, except for the fact that the measured signal includes noise components as well.

12dB SINAD equates more or less to the traditional 10dB (S+N)/N ratio, but the beauty of the SINAD technique is that the measurement can be made by a single connection to the audio output of a receiver and you can crank the modulated RF source up and down until you have 12dB SINAD.
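The notch-and-measure idea described above can be sketched in a few lines of Python. This is only an illustration under my own assumptions: the audio is available as a list of samples, the capture spans a whole number of cycles of the test tone, and the "notch" is done by fitting and subtracting a sine wave rather than with a real filter. The function name `sinad_db` is hypothetical and this is not how any particular instrument does it.

```python
import math
import random

def sinad_db(samples, sample_rate, tone_hz=1000.0):
    """Estimate SINAD in dB from audio samples containing a test tone."""
    n = len(samples)
    mean = sum(samples) / n
    x = [s - mean for s in samples]  # remove any DC offset
    w = 2.0 * math.pi * tone_hz / sample_rate
    cos_ref = [math.cos(w * k) for k in range(n)]
    sin_ref = [math.sin(w * k) for k in range(n)]
    # Correlate against the tone to find its in-phase and quadrature amplitudes.
    a = 2.0 / n * sum(xi * c for xi, c in zip(x, cos_ref))
    b = 2.0 / n * sum(xi * s for xi, s in zip(x, sin_ref))
    # "Notch out" the fundamental by subtracting the fitted tone.
    residual = [xi - a * c - b * s for xi, c, s in zip(x, cos_ref, sin_ref)]
    total_power = sum(xi * xi for xi in x) / n
    residual_power = sum(r * r for r in residual) / n
    return 10.0 * math.log10(total_power / residual_power)

# Synthetic check: a 1kHz tone plus white noise sized for 12dB SINAD.
rng = random.Random(0)
rate = 48000
noise_sigma = math.sqrt(0.5 / (10 ** (12 / 10) - 1))
audio = [math.sin(2 * math.pi * 1000 * k / rate) + rng.gauss(0, noise_sigma)
         for k in range(rate)]
measured = sinad_db(audio, rate)
print(round(measured, 1))  # should come out close to 12
```

The synthetic signal contains no distortion, so what is left after the notch is noise alone; on a real receiver the residual would include harmonics of the 1kHz tone as well.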

Removing the 1kHz modulation in an AM measurement is the same as switching the modulation off. The second great advantage from a service engineer's point of view is that you can align a receiver for best SINAD performance by just watching a single meter and tweaking everything for best SINAD.

This is, I suppose, only true for narrow band communications systems and wouldn't help at all in aligning critically flat band pass filters, but for comms work it's great.

All modern audio test equipment (such as the 8903) includes SINAD measurement and I can find noise floor on SSB by simply dropping the signal generator level down until I have 3dB SINAD.

It's that easy. I first started using SINAD when I discovered an American company called Helper Instruments who make a little low-cost instrument called The Sinadder.

This is a small box, mains powered, with a large meter on the front and one BNC input jack. You just connect the jack to audio from the receiver and there you go. No tweaking, no range changing.

Intermodulation In HF Receivers

The trick is to balance the gain (and hence signal levels) in each stage with the bandwidth of signals that it is subject to. If filtering is done before enough gain stages then sensitivity suffers. If too much gain is applied before filtering then there is a greater possibility of overload and intermodulation.

In a typical dual conversion HF receiver there are two stages of filtering that affect the dynamic range (and IMD performance) of the receiver discounting, for the moment, any RF stage filtering.

Normally the dual conversion receiver up-converts the RF signal to the first IF which is typically between 40 and 80MHz. There the signal is filtered, usually with a crystal filter of 15 to 20kHz bandwidth, and amplified before being down-converted to the second IF which is normally below 2MHz, 455kHz being common. The receiver's main selective filtering is done in this second IF stage with bandwidths depending on reception mode - 2.2kHz is normal for SSB reception. The dual conversion arrangement is popular because it works well, providing good to excellent image rejection and good filter shape factors without using excessively expensive components.

Tuning is also straightforward requiring only one tuneable local oscillator (for the up-conversion to the first IF).
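A small worked example may help with the frequency plan just described. It assumes a high-side local oscillator (LO above the first IF) and a 45MHz first IF, which is within the 40 to 80MHz range quoted above; the function name is my own and other receivers will differ in detail.

```python
def up_conversion_plan(rf_mhz, first_if_mhz):
    """High-side LO plan: LO = RF + IF, and the image sits at LO + IF."""
    lo_mhz = rf_mhz + first_if_mhz
    image_mhz = lo_mhz + first_if_mhz
    return lo_mhz, image_mhz

# Assumed 45MHz first IF; tuned frequencies across the HF range.
for rf in (0.1, 7.0, 30.0):
    lo, image = up_conversion_plan(rf, 45.0)
    print(f"RF {rf}MHz -> LO {lo}MHz, image {image}MHz")
```

With these assumptions every image frequency lies above 90MHz, far outside the 0 to 30MHz tuning range, and a single oscillator sweeping roughly 45 to 75MHz covers the whole of HF.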

With a few exceptions, most HF receivers have no selective RF stages (after all, the up-conversion has reduced the image rejection circuit to a simple RF low-pass filter cutting off below the IF frequency) or include only switched sub-octave filtering, which helps with even-order intermodulation but does nothing for odd-order effects. The signal losses through the filters are a problem in the sensitivity stakes anyway. This means that all of the receiver's circuitry up to the first IF filter (called the roofing filter) sees the whole of, or at least a large part of, the RF signals coming from the antenna. After that filter the circuit only sees signals within about 20kHz of the tuned frequency up to the selective filter, which then eliminates everything except the required audio bandwidth.

By choosing IMD test frequency separations appropriately it is possible to establish the performance of each section of the receiver. Separations greater than 50kHz mean that the test signals will be blocked by the roofing filter and so this tests the RF stages, the up-conversion mixer and any IF amplifier before the roofing filter. It is common for there to be little improvement in IP3 figures once the 50kHz separation is exceeded - this simply indicates the lack of any RF selectivity in a receiver. Testing at a 4 or 5kHz separation will allow both IMD test signals through the roofing filter virtually un-attenuated so the next stage of circuit up to the selective filter is tested. This includes the first IF amplifier, the down-conversion mixer and the first stage of the second IF amplifier.

Because these circuits have some gain (often a significant amount) the IP3 figure will be lower than for the 50kHz test. At test signal separations of a few hundred Hz the whole of the receiver is tested (assuming a 2kHz bandwidth), though the results of this test tend to reflect on the receiver's audio quality rather than its dynamic range.
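As an illustration of how the test signal separation relates to the tuned frequency, the sketch below places two tones so that one third-order product (2*f1 - f2) falls exactly on the receiver's tuned frequency. This tone placement is a common convention and an assumption on my part; the text does not say exactly where the test tones were put, and the 7000kHz tuned frequency is purely illustrative.

```python
def im3_test_tones(tuned_khz, spacing_khz):
    """Place two tones above the tuned frequency so 2*f1 - f2 lands on it.

    Returns (f1, f2, lower_product, upper_product), all in kHz.
    """
    f1 = tuned_khz + spacing_khz
    f2 = tuned_khz + 2 * spacing_khz
    return f1, f2, 2 * f1 - f2, 2 * f2 - f1

# The three separations discussed in the text, in kHz.
for spacing in (50, 5, 0.3):
    f1, f2, lower, upper = im3_test_tones(7000, spacing)
    print(f"spacing {spacing}kHz: tones {f1} and {f2}, products {lower} and {upper}")
```

At 50kHz spacing both tones sit well outside a 15 to 20kHz roofing filter, while the product they generate in the earlier stages falls right in the passband.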

In my opinion many receivers have too much gain before the selective filter, and the down-conversion mixer can cause severe IMD products when listening to signals close to strong stations. I have tested some receivers that give good +15 to +20dBm IP3 performance at 50kHz spacing but very poor results in the region of -30dBm at 5kHz spacing. Needless to say the 7030 is not designed like that, and the IF amplifiers and down-conversion mixer will return an IP3 of +12dBm. The price paid, of course, is in sensitivity, but even with 10dB of RF pre-amplification the close in IP3 should be better than 0dBm.

So what happens when the IMD test signal separation falls between the 5kHz and 50kHz values? Well I would expect a gradual transition of values as more of the test signal passes through the roofing filter with narrower frequency separations. And up to a point that is what happens, but some strange happenings can be observed along the way.

Intermodulation In Passive Components

They tested the receiver at 20kHz frequency spacing with the RF pre-amplifier switched on and obtained an IP3 result at around +12dBm which is some 12dB below the expected value. They had tested the receiver with an IMD product level at the noise floor, but because of the discrepancy with AOR's measurements continued the test at different signal levels and produced a graph of signal level vs. IMD product level.

This showed an unusual characteristic; the IMD product level rose quickly from the noise floor by about 5dB for a 1dB input change and then stayed more or less constant for a further 10dB change. At higher input signal levels it then started to follow the expected 1:3 slope which corresponded to an IP3 of +25dBm (more or less to specification). I repeated their tests and found similar strange effects although I was not able to duplicate the results exactly.
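To see why the slope matters, here is a minimal sketch of the usual third-order relationship, with all levels referred to the receiver input in dBm. The function names are my own; the formulas are the standard ones for a device that follows a pure cubic law, which is exactly the assumption the measurements above call into question.

```python
def expected_imd_dbm(tone_dbm, ip3_dbm):
    """IMD product level predicted by a pure third-order law."""
    return 3 * tone_dbm - 2 * ip3_dbm

def apparent_ip3_dbm(tone_dbm, imd_dbm):
    """IP3 implied by a single measurement of tone level and product level."""
    return tone_dbm + (tone_dbm - imd_dbm) / 2

# A well-behaved stage with IP3 = +25dBm: the implied IP3 is the same
# whatever test level is chosen (the tone levels here are illustrative).
for tone in (-30, -20, -10):
    imd = expected_imd_dbm(tone, 25)
    print(tone, imd, apparent_ip3_dbm(tone, imd))
```

If the product level does not rise 3dB per dB of input, the value returned by `apparent_ip3_dbm` changes with the test level, so a figure taken at the noise floor and one taken at a higher level can disagree by many dB.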

Detailed investigation showed that the effect was very frequency dependent (it did not occur at all at frequency separations above 25kHz) and was often inconsistent, giving different results on different test runs. A gradual elimination of components that may cause the effect pointed to the roofing filter being the guilty party and indeed slight warming or cooling of that part caused significant changes in the IMD level virtually confirming it as the culprit.

That IMD should occur in a crystal filter is not surprising. The design of the RF stages in the 7030 has already been changed after initial production because of IMD in the 1.7MHz high-pass / low-pass filter. In this case both inductors and capacitors were responsible for IMD products near the filter's cut-off frequency (where voltages and currents in the filter tend to be highest) and the problem was solved by specifying larger inductors and a different dielectric for the capacitors. The point is, though, that the IMD in these passive components behaved in the expected manner - the product level rose at three times the rate of the test signal level, in dB terms.

Whatever is happening in the roofing filter cannot be described with a standard polynomial transfer function - indeed the input / output characteristic must actually change with the applied signal level and as such its IMD performance cannot be characterised with an IP3 figure. Since the filter is a mechanical device - energy is transferred from the input to the output by way of physical motion - the best mechanism I can think of for the observed behaviour is some kind of rattle. If anyone with more knowledge of filter technology wants to offer explanations I am happy to listen.

To summarise the observations - the roofing filter produces IMD products when one or both of the test signals are in the transition band of the filter (between pass-band and stop-band) and the level of these products does not obey the expected 1:3 input / output level ratio. The roofing filter in the 7030 is a four-pole fundamental mode crystal filter with 15kHz bandwidth at 45MHz made up of two monolithic dipoles. Its input and output impedances are matched to 500 ohms and it is driven from a heavily damped tank circuit. A 0dBm signal into the receiver's antenna input at the tuned frequency produces 510mV of IF signal at the input to the filter and 380mV at its output.
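The voltages quoted above can be turned into more familiar figures with a little arithmetic. The sketch below assumes that both voltages are rms values and that both are measured across the stated 500 ohms; neither point is spelled out in the text, so treat the dBm figures as indicative only. The insertion loss depends only on the voltage ratio and does not rely on the rms assumption.

```python
import math

def dbm_from_volts(v_rms, r_ohms):
    """Power in dBm for an rms voltage across a resistance."""
    power_mw = (v_rms ** 2 / r_ohms) * 1000.0
    return 10.0 * math.log10(power_mw)

def loss_db(v_in, v_out):
    """Voltage ratio in dB, valid when both sides see the same impedance."""
    return 20.0 * math.log10(v_in / v_out)

filter_in_dbm = dbm_from_volts(0.510, 500.0)   # assumes 510mV rms in 500 ohms
filter_out_dbm = dbm_from_volts(0.380, 500.0)  # assumes 380mV rms in 500 ohms
insertion_loss = loss_db(0.510, 0.380)
print(round(filter_in_dbm, 2), round(filter_out_dbm, 2), round(insertion_loss, 2))
```

On these assumptions the roofing filter costs a little over 2.5dB at the tuned frequency.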

AM Radio Signal-To-Noise Measurements

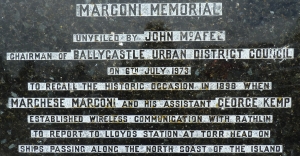

The basic rule for measuring signal to noise ratio in an AM receiver was laid down years ago and taught to all budding radio engineers by companies such as the Marconi Company.

The measurement is taken for any given modulated RF signal by measuring the ratio between the audio output with an unmodulated carrier and the audio output with the modulation applied. You do NOT turn the whole input signal on and off to make the measurement as you would do for SSB or CW S/N ratio measurement.

The reason for this approach is that if you assume a diode (or diode type) detector having a non-linear region at the lower part of its V/I curve, and the detector is operating under no-signal conditions, the generated noise within the receiver will appear symmetrically disposed about the V (Y) axis of the detector characteristic. The detector sees the noise as a signal having 100% modulation by random noise and demodulates it by effectively using half wave detection.

This means that the baseband audio coming out of the detector has a peak value of half the incoming noise (half wave rectification). If you now add a low level RF signal into the receiver, this moves the signal arriving at the detector along the X axis, and appears to the detector as a small signal still 100% modulated by random noise.

If you plot the output from the detector under these conditions you will see that the baseband which was half the p-p value of the noise with no added signal will now reach a p-p value of twice the no-signal condition, because all of the noise component is now demodulated. Proof of this is to measure the no-signal audio output with a true rms meter (like the HP 3400A) and then introduce an RF unmodulated carrier at a low level.

As this is increased you will see the audio output rise to - guess what? 3dB more than the no-signal output. When you tune in to a low level carrier in AM mode, the receiver noise increases, and that's what you are hearing. Now, if you add 30% modulation to the low level carrier when the noise output is at the 3dB point, you will not, in most normal circumstances, see or hear any increase in the audio output because the 30% modulation is much smaller than the 100% noise modulation.

If you listen to the receiver at the same time, a practice which should always be observed, you may hear the faintest whisper of the modulation, but often you won't hear it at all. The result of all this is that if you employ the same technique to measure noise floor as we commonly and correctly do in an SSB receiver, i.e. switching the incoming signal on and off to determine when the RF produces the same audio level as the internal noise of the receiver, the measured 3dB increase in the AM receiver is actually the internal noise being fully demodulated and has nothing whatever to do with the modulation on the incoming signal.
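The rise in noise output when an unmodulated carrier appears can be reproduced with a small simulation. This is a sketch under my own assumptions, not the Marconi derivation: the detector is modelled as an ideal envelope detector working on narrow band Gaussian noise, the audio is AC coupled, and the carrier is taken as ten times the rms noise voltage. A real diode detector will differ in detail.

```python
import math
import random

def envelope_ac_rms(carrier, sigma, n=50000, seed=1):
    """AC-coupled rms of an ideal envelope detector's output.

    The IF signal is modelled as a carrier plus in-phase and quadrature
    Gaussian noise components, each of rms value sigma.
    """
    rng = random.Random(seed)
    env = [math.hypot(carrier + rng.gauss(0, sigma), rng.gauss(0, sigma))
           for _ in range(n)]
    mean = sum(env) / n  # the DC term, removed by AC coupling
    return math.sqrt(sum((e - mean) ** 2 for e in env) / n)

no_signal = envelope_ac_rms(0.0, 1.0)      # noise alone
with_carrier = envelope_ac_rms(10.0, 1.0)  # unmodulated carrier added
rise_db = 20 * math.log10(with_carrier / no_signal)
print(round(rise_db, 1))
```

In this idealised model the audio noise rises by a few dB with no modulation present at all, which is the same order as the 3dB step described above.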

Why doesn't the SSB receiver behave the same as the AM receiver? Because the SSB receiver uses a product detector of some sort which is linear and not non-linear as in the case of the AM detector.

Points to ponder. If you demodulate AM using a synchronous detector, or a homodyne detector where the incoming carrier is replaced by one generated in the receiver (as in the case of an SSB product detector), the detector is operating in a linear mode. The use of a synchronous detector therefore should allow you to measure the true AM noise floor of the receiver. I checked this using the synchronous detector in the AR-7030 and it seems to be true.

When it comes to measuring an AM signal to noise ratio of, say, 10dB then the technique is the same. You leave the unmodulated carrier on all the time and switch the modulation on and off until you get the 10dB ratio in audio output. What you have to be careful about is the agc action of the receiver you are testing, because if the agc has a low threshold, you may well be into agc at the point you are measuring the 10dB ratio, and this can lead to erroneous results. In this respect, you must (if you can) switch off the agc in order to ensure that the measurement is correct.

I checked a Collins 51S-1, a Kenwood R-820 and a Cubic 3030A, since all these receivers have diode or diode-like detectors. I then checked a W-J 8711 and an RA-1792 because one uses DSP demodulation and the other a homodyne detector. The results are quite unambiguous. Measured using the signal on/off method to determine the 3dB increase in output from an unmodulated signal, the 51S-1 came up at -126dBm, the R-820 at -130dBm and the 3030A at -128dBm.

Adding 30% modulation to the input signal at these levels did not increase the audio output, and the modulation was virtually inaudible. Now I measured the same receivers for a 3dB increase in audio output by the Marconi method, leaving the carrier on all the time and looking for a 3dB increase in output between modulation on and modulation off.

The results were now: 51S-1 -115dBm, R-820 -117dBm and the Cubic 3030A -117dBm.

THIS IS THE TRUE 3dB MEASUREMENT and shows a difference of 11 to 13dB from the carrier on/off method.

The results for the non-diode detectors with an unmodulated carrier on/off showed 3dB noise output increase at -116dBm for the RA-1792 and at -115dBm for the 8711. Repeat tests using the carrier on continuously and looking for a 3dB increase with modulation on/off gave -118dBm for the RA-1792 and -112dBm for the 8711.

In other words, for receivers using DSP or homodyne/reinserted carrier type AM detectors, there is virtually no difference in results between the two measurement methods - which is what I expected if the Marconi explanation for the diode-like detectors was correct.
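The figures quoted above are easier to compare side by side. The short Python sketch below simply tabulates them and works out the difference between the two methods for each receiver; the variable names are mine, the numbers are the ones given in the text.

```python
# (carrier on/off result, modulation on/off result), both in dBm
results_dbm = {
    "51S-1": (-126, -115),    # diode or diode-like detector
    "R-820": (-130, -117),    # diode or diode-like detector
    "3030A": (-128, -117),    # diode or diode-like detector
    "RA-1792": (-116, -118),  # DSP or homodyne detector
    "8711": (-115, -112),     # DSP or homodyne detector
}

# Positive difference: the carrier on/off method gave the lower figure.
differences = {name: mod - onoff for name, (onoff, mod) in results_dbm.items()}
for name, diff in differences.items():
    print(f"{name}: {diff:+d}dB")
```

The three diode-type receivers differ by 11 to 13dB between methods, while the other two stay within 3dB either way.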

Summary so far. If a receiver under test uses a diode or diode-like detector then attempting to measure noise floor using the carrier on/off method could make the noise floor look some 11dB lower (better) than it really is, whereas the linear type of AM detectors will give the correct result.

The way to remove any doubt in these measurements is to use the Marconi methods and leave the unmodulated carrier on all the time, and measure the signal/noise ratio at the noise floor by looking at the difference between modulation on and modulation off - always remembering to disable the agc system if this is possible.

So far, so good. Now let me address the measurement of signal to noise ratios at the 10dB level. Put fairly simply, when I got to a 10dB ratio there was no significant difference in results between switching the modulation on and off and switching the entire modulated signal on and off, but this may only be true for the particular receivers I tested.

The common sense approach must be to employ the modulation on/off with constant carrier method, which I have described as Marconi simply because that is my background. It may be confirmed by anyone having a copy of Ulrich Rohde's book Communications Receivers: Principles and Design who cares to take a look at section 2.3, pp65 and 66, where he re-states the method of AM signal to noise ratio measurement. I for one am not going to argue with Ulrich Rohde on this matter.

Happy reading - John Wilson